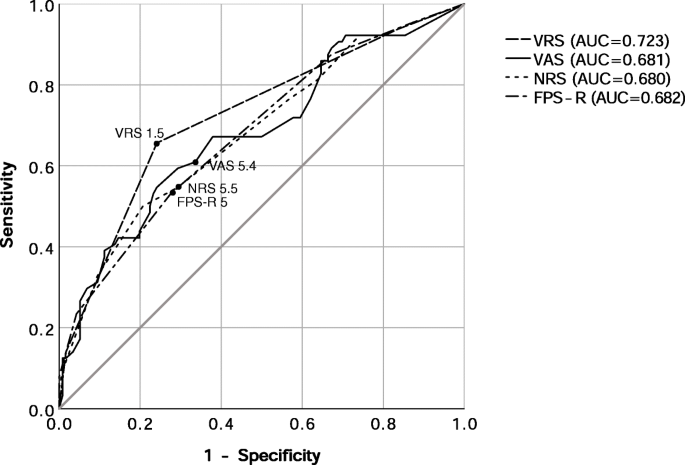

The Receiver Operating Characteristic (ROC) curve and the Area Under the Curve (AUC) are used to evaluate and compare the performance of binary classification models. They measure the discrimination power of your predictive classification model. In simple words, they check how well the model is able to distinguish (separate) events and non-events.

Suppose you are building a predictive model for a bank to identify customers who are likely to buy a credit card. In this case, purchase of the credit card is the event (or desired outcome) and non-purchase of the credit card is the non-event.

The ROC curve is a plot of the proportion of true positives (events correctly predicted to be events) versus the proportion of false positives (non-events wrongly predicted to be events) at different probability cutoffs, and AUC is the area under that curve. The True Positive Rate is also called Sensitivity. The False Positive Rate is also called (1-Specificity). Sensitivity is on the Y-axis and (1-Specificity) is on the X-axis. The diagonal line represents a random classification model. It is equivalent to prediction by tossing a coin, which gives an AUC of 0.5. The higher the AUC score, the better the model.
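To make the idea of "different probability cutoffs" concrete, here is a minimal sketch in plain Python. The eight customers, their labels (1 = bought the credit card, 0 = did not) and their predicted probabilities are made up for illustration, and the function names `roc_points` and `auc` are hypothetical helpers, not part of any library.

```python
def roc_points(labels, scores):
    """Return (FPR, TPR) pairs, one per cutoff, from strictest to loosest."""
    positives = sum(labels)               # number of events
    negatives = len(labels) - positives   # number of non-events
    # A cutoff above every score predicts no events at all: point (0, 0).
    points = [(0.0, 0.0)]
    for cutoff in sorted(set(scores), reverse=True):
        # Everyone scoring at or above the cutoff is predicted to be an event.
        tp = sum(1 for y, s in zip(labels, scores) if s >= cutoff and y == 1)
        fp = sum(1 for y, s in zip(labels, scores) if s >= cutoff and y == 0)
        points.append((fp / negatives, tp / positives))
    return points


def auc(points):
    """Area under the ROC curve using the trapezoidal rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area


# Illustrative data (an assumption, not from a real bank).
labels = [1, 1, 0, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.1]

points = roc_points(labels, scores)
print(auc(points))                        # 0.8125
print(auc([(0.0, 0.0), (1.0, 1.0)]))      # 0.5, the diagonal (coin toss) line
```

Each point is (1-Specificity, Sensitivity) at one cutoff. In practice you would usually rely on a tested library routine rather than hand-rolled code like this.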
